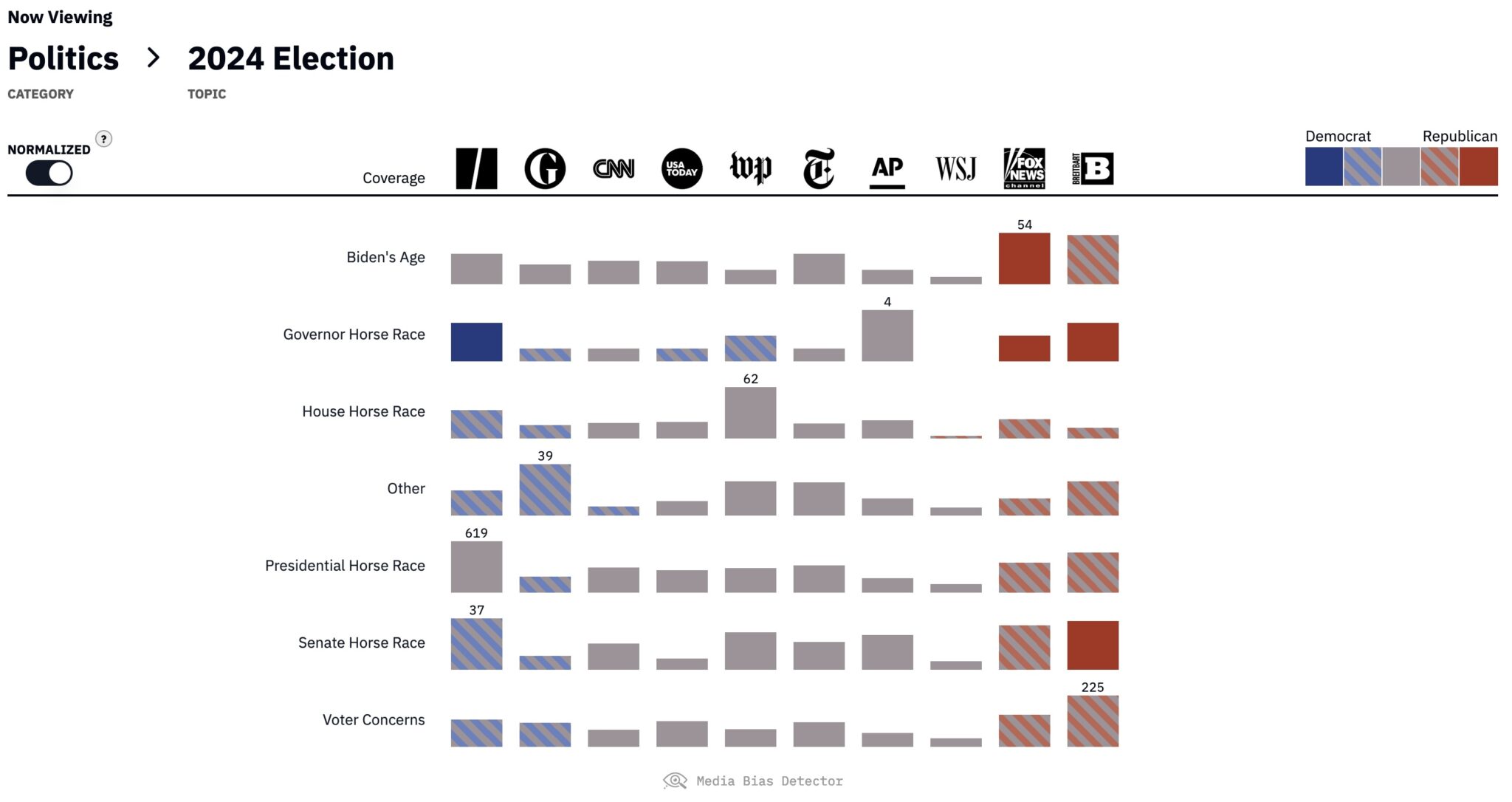

I worked on the Media Bias Detector while at Microsoft Research, in collaboration with the Penn Media Accountability Project. We have been working hard to track and classify (using large language models) the top stories published by a collection of prominent publishers spanning the political spectrum in close to real time. Please feel free to visit the site and explore the data! Any feedback is appreciated.